The week before Easter, Joseph Reiser was approached by Simon Webb and John Bradbury of the BBC Philharmonic to help them produce a short piece of music for broadcast over the bank holiday weekend. Daniel Whibley (double bass player, composer and arranger) had created a wonderful arrangement of Easter Parade by Irving Berlin and the plan was to put together a 60+ piece orchestra from home, in an effort to break what is most likely one of the longest silent periods in the orchestra’s history. In this article Joseph explains the challenges of this project and how they were overcome.

A Traditional Recording On Its Head

Recording and mixing an orchestra is not usually an audio-processing-intensive task. It relies on great-sounding instruments played beautifully in a wonderful-sounding room, captured by engineers putting fantastic microphones in exactly the right place. With such a good sound source it’s a job that’s difficult to do very badly, but by the same token is also extremely difficult to do as well as the BBC do day to day in their studio at Salford Media City. The detail and depth of their recordings rely not only on a first-class recording engineer at the helm but also a keen musical ear and knowledge of the pieces to balance the mix.

Sadly, for this project, all of this has been turned upside down and our concert hall has been replaced with a bedroom, our Schoeps MK4 with an iPhone or home recording equipment.

Thankfully we still have great-sounding instruments played beautifully, but all the other steps in this process are lost and will need to be compensated for and recreated in the audio prep and mix process. To alleviate some potential pitfalls during capture, some things can be done:

Use lossless recording apps if using phones/tablets.

Record in the driest/deadest-sounding room available, avoiding rooms with lots of hard reflective surfaces in favour of carpet and soft furnishings.

Place microphones/recording devices close to the instrument to capture as much direct sound as possible.

While far from ideal, these steps should at least give us a fighting chance, helped along by some other fortunate aspects of the project.

Power In Numbers And A Helpful Aesthetic

Taking recordings of varying quality from disparate sound environments and combining them into something truly cohesive can be an insurmountable task, no matter the skills and tools at your disposal. If this project were a string quartet rather than a full orchestra I would be relying on the quality and similarity of capture far more heavily, and if one part contained significantly more reverberance than the rest it would be very difficult to create a smooth, homogeneous sound. A sparse arrangement with fewer instruments means we hear much more of the detail of each sound source, including all the spatial cues that give away the different recording environments. With 35 musical parts and 60+ individual players, however, the inconsistencies between recording environments are more easily masked (for example, there are eleven first violin players playing one part in unison).
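As a rough illustration of why numbers help, assume (a simplification that is not part of the original project notes) that the players in a unison section are equally loud and that their signals are uncorrelated, so their powers add. Under that assumption each individual recording, room sound included, sits a fixed number of decibels below the section as a whole:

```python
import math


def single_player_level_db(num_players):
    """Level of one player relative to the summed section, in dB.

    Assumes equal-level, uncorrelated signals whose powers add.
    This is an idealisation, not a measurement from the project.
    """
    return 10.0 * math.log10(1.0 / num_players)


# Compare a soloist, a quartet-sized group, and the eleven first violins.
for players in (1, 4, 11):
    print(f"{players:2d} players: {single_player_level_db(players):6.1f} dB")
```

With eleven first violins, any one player's room ends up roughly 10 dB below the section total, which is a large part of why a single boxy bedroom is far easier to hide here than it would be in a quartet.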

The aesthetic of a traditional orchestral recording also helps here. Listening to recordings of the BBC Philharmonic you can hear the lush reverberant concert halls and specifically a direct/reverb ratio that’s very much on the wet side. Sending tracks that contain a lot of their own individual reverberant energy to the same larger/longer reverb will help mask the inconsistencies between them and a heavily wet direct/reverb ratio will further help to blend these together into the same space.

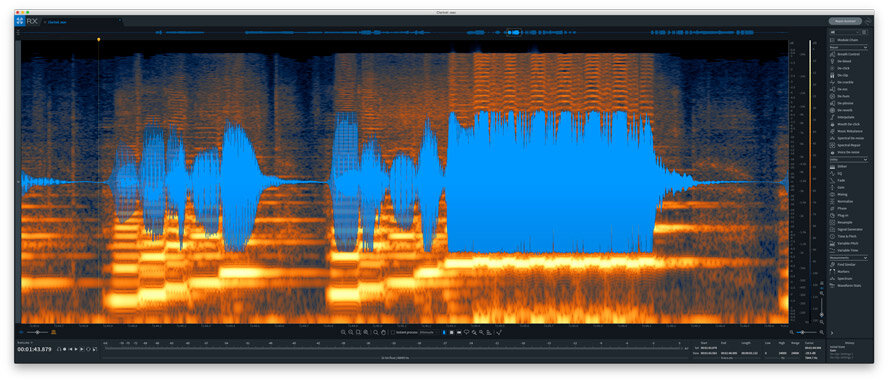

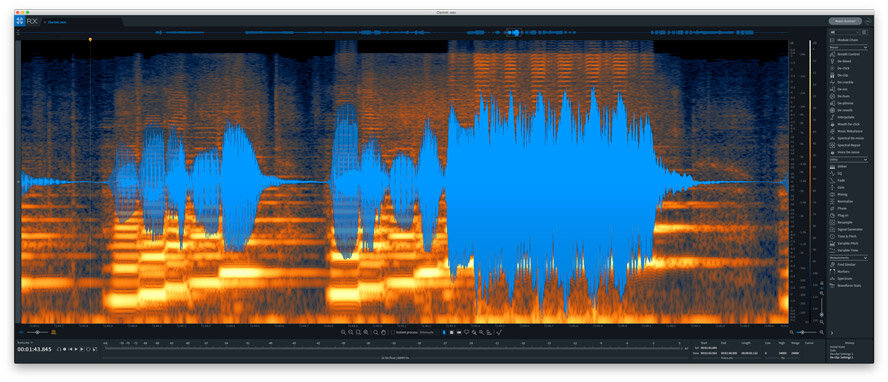

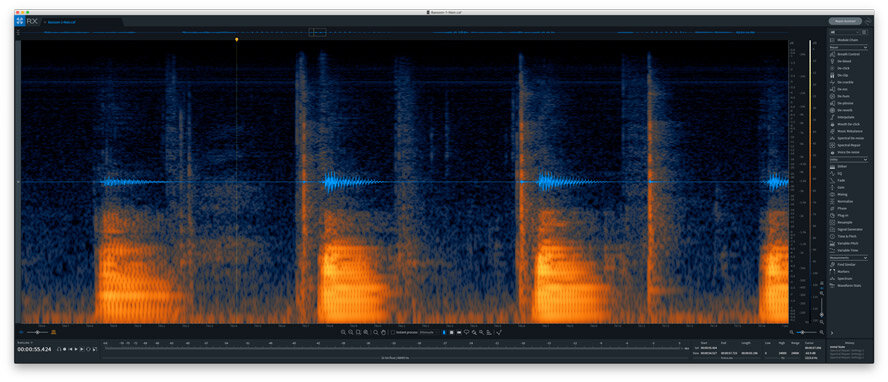

Audio Restoration – iZotope RX To The Rescue

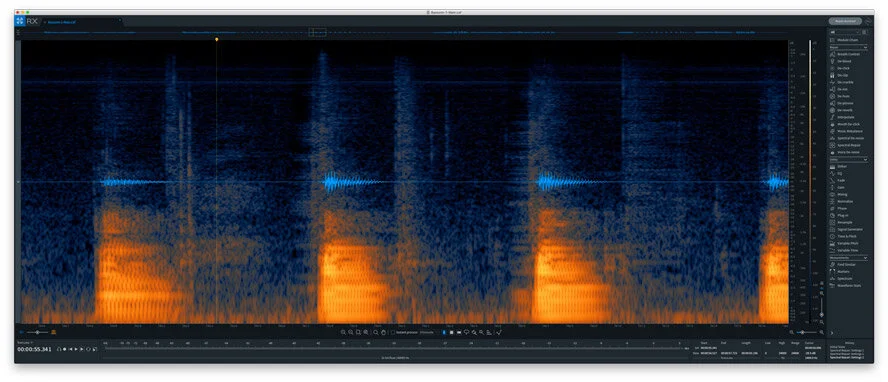

I have to admit that I was surprised at the quality of some of the audio that was recorded on phones or tablets; device microphones have evidently improved dramatically over the past few years, and at the risk of being exiled by the entire audio community, some of the recordings sounded reasonably good. But some didn’t, and others were dire in a multitude of ways: limited frequency response, heavy clipping, an audible noise floor, and extraneous noise from clicking keys and creaking seats.

There are lots of tools available to do this kind of audio repair but the one I know best is iZotope RX. Using a mixture of Spectral Repair, De-clip, and Spectral De-noise, paying close attention to the artifacts these can leave behind, it was possible to remove most of the offending clicks, creaks and clips entirely, leaving much cleaner audio to work with. Where complete removal without noticeable artifacts wasn’t possible, I managed to attenuate these to the point where they would be masked within the mix.

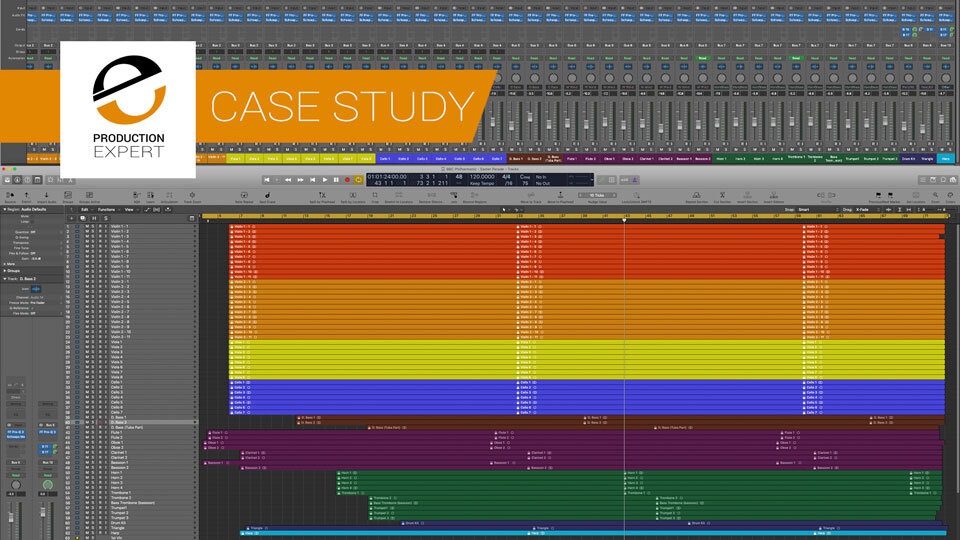

Mix Structure, Bus Routing And VCAs

The Mix Window in Logic Pro X.

With a large channel count to deal with, subgroups and VCAs are essential in order to easily dissect the different parts of the orchestra, and with multiple instruments for each part or section, bus processing helps to tame the build-up where they sum together. Each section has its own subgroup: Violin 1, Violin 2, Viola, Cello, Double Bass, Woodwinds, Horns & Brass, and Percussion. Each section also has a VCA assigned to its corresponding channels.
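The difference between the two tools can be sketched in a few lines: a subgroup is where a section's audio is summed and processed, whereas a VCA carries no audio and simply offsets the faders of the channels assigned to it. The class, function names, and fader values below are hypothetical and are not taken from the Logic session; only the count of eleven first violins comes from the project.

```python
from dataclasses import dataclass


@dataclass
class Channel:
    name: str
    section: str          # subgroup bus the audio is routed to
    fader_db: float = 0.0


def build_channels(section, count):
    """Create one channel per player, all routed to the section subgroup."""
    return [Channel(f"{section} #{i + 1}", section) for i in range(count)]


def post_vca_level_db(channel, vca_db):
    """A VCA adds its fader offset (in dB) to each assigned channel fader."""
    return channel.fader_db + vca_db.get(channel.section, 0.0)


channels = build_channels("Violin 1", 11)
vca_db = {"Violin 1": -3.0}  # hypothetical VCA move: pull the whole section down 3 dB

levels = [post_vca_level_db(ch, vca_db) for ch in channels]
```

Because the VCA acts on the channel faders rather than on the summed bus, the balance between players inside the section is preserved while the level hitting any subgroup processing changes with it.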

Equalisation – In The Traditional Sense

With source material that varies wildly in tone, a good place to start is to equalise the differences between the various recordings. Specifically, the aim is not to EQ these to ‘fit in the mix’ but to even out the differences between each violin or each cello so there is a consistent tonal character across the whole section and orchestra. Heavy-handed EQ to make broad tonal changes and surgical cuts to tame small room resonances both help to alleviate the more noticeable outliers and bring them in line with the general trend of the section. Any overall tonal shaping can be done on each section subgroup.
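One way to picture ‘bringing outliers in line with the general trend of the section’ is to compare each recording's level in a few broad bands against the section median. The sketch below does only that; the band names, player labels, and dB figures are invented for illustration, and in practice this matching was done by ear with EQ rather than by script.

```python
from statistics import median

# Hypothetical band levels in dB for three violin recordings.
band_levels_db = {
    "violin_a": {"low": -30.0, "mid": -20.0, "high": -28.0},
    "violin_b": {"low": -22.0, "mid": -21.0, "high": -40.0},  # boomy and dull
    "violin_c": {"low": -31.0, "mid": -19.0, "high": -27.0},
}


def corrective_eq_db(levels):
    """Gain per band that would move each recording to the section median."""
    bands = list(next(iter(levels.values())))
    target = {
        band: median(player[band] for player in levels.values())
        for band in bands
    }
    return {
        name: {band: target[band] - player[band] for band in bands}
        for name, player in levels.items()
    }


corrections = corrective_eq_db(band_levels_db)
```

Using the median rather than the mean keeps one badly skewed recording from dragging the target towards itself, which matches the idea of correcting outliers towards the section rather than the other way round.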

Another aspect to consider here is the difference in frequency content between a close-mic recording and the distant-mic capture of an entire section. Close-mic recordings tend to have much more low-mid and low-frequency content and can accentuate certain harmonic content as well as extraneous noise that wouldn’t be as prominent in a distant-mic recording. Compensating for these considerations using EQ, or removing them with audio repair tools as above, further helps to move our bedroom recordings towards a sound emulating a live orchestra in a concert hall.

Bedroom To Concert Hall

Putting all these different recordings in the same space to create a convincing impression of a concert hall involves thinking about how we hear sound sources in the real world. Sources that are to the left or right of the listener will appear louder in the corresponding ear (which we can easily emulate with pan), but there are also subtle timing differences in the direct signal between the two ears. Instruments that are closer appear louder and those that are further away quieter, but distant sound sources will also exhibit a high-frequency roll-off the further away they get. Close sources will have a lower reverb/direct ratio (less wet) but also a longer pre-delay in relation to the direct signal, while distant sources have the opposite: a higher reverb/direct ratio and a shorter pre-delay.
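These cues can be put into rough numbers. The sketch below uses textbook approximations that are assumptions rather than anything measured on this project: a constant-power pan law, the Woodworth spherical-head estimate of interaural time difference, the free-field inverse-distance law, and a single side-wall reflection from an image-source model to show why a closer source has a longer gap before its first reflection. High-frequency roll-off with distance is not modelled, and the hall dimensions are hypothetical.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, approximate value at room temperature
HEAD_RADIUS = 0.0875    # m, a commonly used average (assumption)


def constant_power_pan(position):
    """Left/right gains for a position from -1.0 (left) to +1.0 (right)."""
    angle = (position + 1.0) * math.pi / 4.0
    return math.cos(angle), math.sin(angle)


def interaural_delay_ms(azimuth_deg):
    """Woodworth estimate of the arrival-time difference between the ears."""
    theta = math.radians(azimuth_deg)
    return HEAD_RADIUS / SPEED_OF_SOUND * (theta + math.sin(theta)) * 1000.0


def level_change_db(distance_m, reference_m=1.0):
    """Inverse-distance law: about -6 dB per doubling of distance."""
    return 20.0 * math.log10(reference_m / distance_m)


def first_reflection_gap_ms(distance_m, wall_offset_m):
    """Gap between the direct sound and one side-wall reflection.

    Source and listener sit on a line parallel to the wall, wall_offset_m
    away from it, so the reflected path is the hypotenuse to the mirrored
    source.
    """
    reflected_path = math.hypot(distance_m, 2.0 * wall_offset_m)
    return (reflected_path - distance_m) / SPEED_OF_SOUND * 1000.0


# Hypothetical hall: side wall 8 m from the centre line.
close_gap = first_reflection_gap_ms(distance_m=3.0, wall_offset_m=8.0)
far_gap = first_reflection_gap_ms(distance_m=15.0, wall_offset_m=8.0)
```

In this toy geometry the source 3 m away has a noticeably longer gap before its first reflection than the one 15 m away, which is the behaviour the pre-delay settings are imitating.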

While hearing a mono signal (or something approximating it) is something that occurs occasionally in the real world (for example our own voice through bone conduction to our ears, or external sources where all frequencies arrive effectively in phase and of equal amplitude at both ears), a mono reverb is a distinctly unnatural event and detracts from any realistic space you’re trying to create. Encoded within the individual mono recordings is not only the direct instrument sound but also the sound of the room it was recorded in. To alleviate the unnatural sound of this and create some space around the instrument you could add a small reverb gently mixed in with the direct signal, but in this case, we want to put all the instruments in the same large hall.

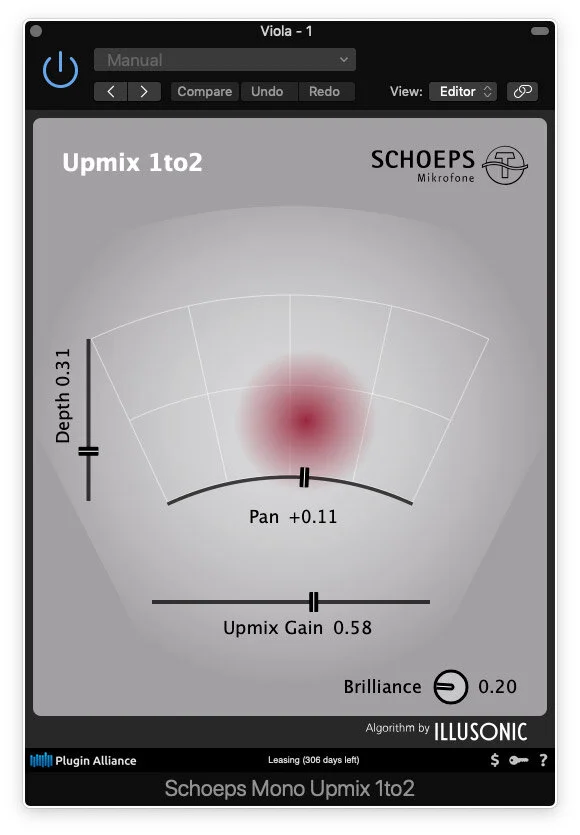

To achieve a more natural stereo sound without introducing more reverb before our main processing, we can use upmixing to convert this mono source to stereo. There are a few different plugins that can achieve this, but in testing a few I found that the ‘Schoeps Mono Upmix’ did a great job of extracting the diffuse elements of the signal, creating a natural stereo space around the dry source, and had little effect on the tonal characteristics of the instrument. It’s also light on processing, so running 60+ instances didn’t affect performance in any way.

NB. I’m certainly not suggesting that mono reverbs don’t have their place in music production, and they can definitely be a useful part of a creative mix. In this context, however, we are trying to emulate a real environment as accurately as possible.

Placing the instruments across the stereo field is simple with an orchestra, as the layout is already determined. The strings run across the front of the stereo field from left to right: Violin 1, Violin 2, Viola, and Cello. Woodwinds are front and centre with Flutes and Oboes in front and Clarinets and Bassoons behind them. At the back of the centre section are Horns, Trumpets, Trombones, and Tuba, and at the rear, left to right, are percussion, timpani, and double bass.

After adjusting the position of the instruments in the stereo field and auditioning all the reverbs I had at my disposal, I found ‘FabFilter Pro-R’ best suited to creating a lush concert hall, with the flexibility to create depth and detail within the reverberant signal. After settling on a general reverb setting I split the reverb into three separate busses (close, mid, and far), duplicating the plugin and settings on each bus, and then adjusting only the pre-delay and close-far settings on each to suit their purpose. When sending the relevant orchestra subgroup to each reverb I adjusted the direct/reverb level of each section to further mimic the effect of their distance from the listener.
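The three-bus arrangement can be written down as a small configuration sketch: one shared hall setting, copied three times, with only the pre-delay and distance changed per bus. Everything numeric here is a placeholder, the field names are descriptive labels rather than the plugin's actual parameter names, and the section-to-bus assignments are illustrative guesses based on the seating layout.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ReverbBus:
    name: str
    decay_s: float      # shared hall character, identical on every bus
    predelay_ms: float  # varied per bus
    distance: float     # 0.0 = close, 1.0 = far; varied per bus


# Hypothetical general setting, duplicated onto each bus below.
base = ReverbBus(name="hall", decay_s=2.2, predelay_ms=0.0, distance=0.5)

buses = {
    "close": replace(base, name="close", predelay_ms=40.0, distance=0.2),
    "mid": replace(base, name="mid", predelay_ms=25.0, distance=0.5),
    "far": replace(base, name="far", predelay_ms=10.0, distance=0.8),
}

# Hypothetical sends: (bus, send level in dB). Wetter for sections further back.
section_sends = {
    "Violin 1": ("close", -9.0),
    "Woodwinds": ("mid", -6.0),
    "Percussion": ("far", -3.0),
}
```

Deriving each bus from the same base setting keeps the hall itself consistent, so the only things that change from front to back are the cues that signal distance.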

To further add to the sense of depth I repeated the same process as above using three instances of ‘UAD RealVerb Pro’, setting the plugin to a similar character to Pro-R but using only the early reflections (with the late reverb turned all the way off). The early reflections are timed in the same way: close with a longer pre-delay, far with a shorter one, and mid somewhere in between. Subtly blending this in along with the other reverb creates more depth and adds to the sense of realism.

With the reverb taking up a considerable portion of the mix it’s important to pay close attention to how the dry signal is being processed tonally. Placing EQ before the reverb allows you to pull out problematic frequencies that excite the reverb too much and create unwanted resonances, while equalising the wet reverb signal allows you to shape the overall character and blend it with the direct sound more effectively. The mix then becomes a gentle balance between the wet reverb signals, the dry signal from all 60+ instruments, and the tonal balance between them. Sending all the reverbs to one wet bus and all the instrument subgroups to a dry bus allowed me to make subtle EQ changes over each, making this blend as seamless as possible.

Reference Is King Especially When Working In Isolation

As most of us are now working from home or without the equipment we’re used to, it’s even more important to reference other material. During the mix of this project, I selected a set of BBC Philharmonic recordings of similar material and used these as my point of reference to compare the mix and make sure that it approached the aesthetic the orchestra is known for.

When used correctly, metering is also a useful tool when working on headphones or to help compensate for the lack of a well-treated monitoring environment. Personally, I’m a big fan of ‘ADPTR Audio Metric AB’, which combines referencing and metering in one plugin, allowing for easy AB comparison to reference material with built-in level matching, amongst lots of other features.
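Level matching matters because the louder of two otherwise similar signals tends to be judged as the better one. A minimal sketch of the idea, using plain RMS on lists of samples, is shown here; this is a simplification chosen for brevity and says nothing about how Metric AB itself measures or matches level.

```python
import math


def rms(samples):
    """Root-mean-square level of a list of samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def match_gain_db(mix, reference):
    """Gain in dB to apply to the reference so its RMS equals the mix's."""
    return 20.0 * math.log10(rms(mix) / rms(reference))


def apply_gain(samples, gain_db):
    """Scale samples by a gain given in dB."""
    factor = 10.0 ** (gain_db / 20.0)
    return [s * factor for s in samples]


# Toy signals: the commercial reference is four times the amplitude of the mix.
mix = [0.1, -0.1, 0.1, -0.1]
reference = [0.4, -0.4, 0.4, -0.4]

gain_db = match_gain_db(mix, reference)
matched_reference = apply_gain(reference, gain_db)
```

Turning the reference down to meet the mix, rather than pushing the mix up, avoids clipping and keeps the comparison about tone and balance rather than loudness.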

Most importantly of all, and especially in a time of isolation, consider sending your mixes to your friends and colleagues to get a second opinion; if there ever was a time for crowdsourcing mix notes it’s now, and have a catch-up while you’re at it.

How To Hear The Result Of All This Work

Here is a clip that the BBC Phil posted on their Facebook page. Because of the way Facebook videos are embedded you may have to click on the video to get it to play.

It was also broadcast in full on Martin Handley’s Breakfast Show on BBC Radio 3 on Sunday 12 April 2020. Because of the way the BBC website works this will only be available to listeners in the UK, and only for a limited period. Scroll to 1:08:30 to listen to Easter Parade in full.

Acknowledgements

I’d like to extend a thank-you to BBC Tonmeister Stephen Rinker for taking time out of his Easter weekend to listen to and approve the mix, John Bradbury for his time and determination to see this project through, Daniel Whibley for the fantastic arrangement, Jennifer Redmond for collating, organising and delivering all the audio from so many players in such a short amount of time, and Simon Webb for taking a chance on this project.